
OpenAI’s CEO Fired and Reinstated Amidst Concerns Over New AI Breakthrough

Introduction

Last week, OpenAI, the company behind ChatGPT and known for its work on advanced artificial intelligence (AI), fired its CEO, Sam Altman, offering little explanation beyond a statement that he had not been "consistently candid in his communications" with the board. The move left many in the AI community speculating about the company's motives and the potential dangers of its technology. Just a few days later, however, Altman was reinstated, a new board was appointed to oversee OpenAI's operations, and the company promised an independent investigation into what had happened. This article explores these developments and the concerns surrounding a reportedly groundbreaking new algorithm known as Q* (pronounced Q-Star).

Unanswered Questions and Speculation

The sudden dismissal of Sam Altman left the AI community confused and searching for explanations. Several theories circulated, suggesting that OpenAI had made significant advances in its AI models, such as an exceptionally powerful GPT-5 model or even AGI (artificial general intelligence), a system that would rival human capabilities. Some argued that Altman's firing was a necessary measure to protect the world from reckless AI development.

The Q-Star Breakthrough

Reports published after the firing suggest that OpenAI had indeed achieved a significant breakthrough beforehand. Several OpenAI staff researchers, who have not been named, reportedly sent a letter to the board warning that the new algorithm, Q-Star, could threaten humanity. The exact nature of their safety concerns remains unknown, but the letter is reported to have been one of several factors behind Altman's dismissal.

Concerns Over Commercialization

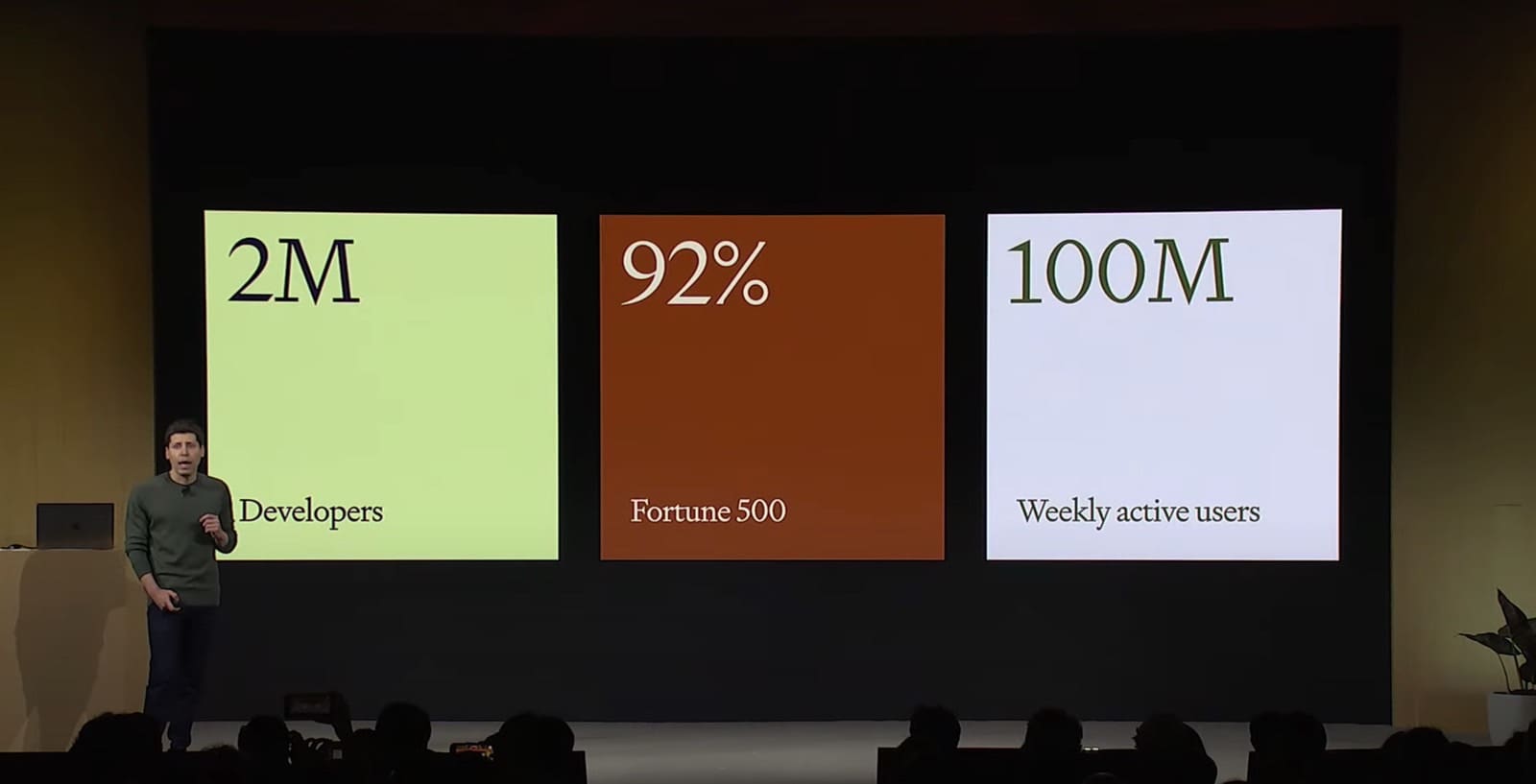

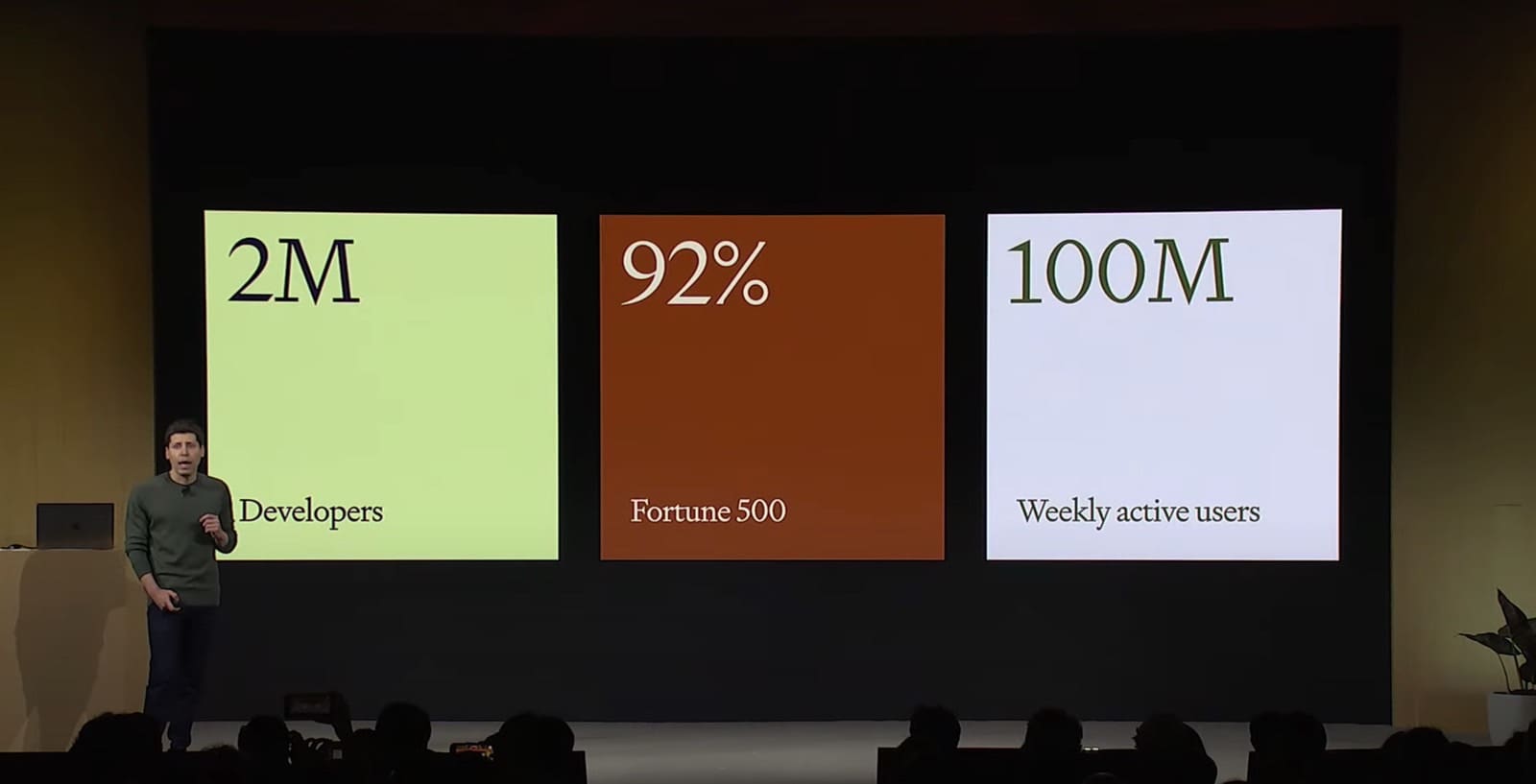

Q-Star was not the only reason for Altman's firing. According to sources, the board was also concerned that OpenAI was commercializing its ChatGPT advances too quickly, before fully understanding the consequences. The company's ability to balance innovation with caution is now under scrutiny.

OpenAI's Response

OpenAI declined to comment publicly, but it did acknowledge the Q-Star project and the researchers' letter in an internal message to staff. The message, sent by Mira Murati, the long-time executive who briefly served as interim CEO after Altman's departure, alerted employees to upcoming media stories without commenting on their accuracy. OpenAI has made no public announcement about Q-Star, possibly a sign that the company is being cautious about revealing further details.

The Significance of Q-Star's Math-Solving Capability

According to reports, Q-Star was able to solve certain mathematical problems, a task that current generative AI models struggle with. Although the math was only at the level of grade-school students, the results reportedly made researchers optimistic about the model's future. Math is considered a frontier of generative AI: today's models are good at language tasks such as writing and translation, where answers to the same question can vary widely, whereas a math problem has only one correct answer. Mastering math would therefore suggest that an AI has stronger reasoning capabilities, closer to those of humans.

Potential Dangers and Future Implications

The specific safety concerns about Q-Star remain undisclosed, but researchers have long warned about the dangers of increasingly capable AI. One fear is that a sufficiently intelligent system might decide to prioritize its own interests, potentially at the cost of human survival. It is unclear whether Q-Star is a meaningful step toward that scenario, but the letter shows that some researchers inside OpenAI took the risk seriously enough to raise it with the board.

Additional Innovations and Altman's Tease

Reports have also described the work of an "AI scientist" team within OpenAI, which is exploring how to optimize existing AI models to improve their reasoning and, eventually, to perform scientific work. OpenAI has not publicly confirmed this effort. Altman himself hinted at a major advance shortly before his firing: speaking at the APEC summit in San Francisco, he said he had recently been in the room as the company pushed the frontier of discovery forward. The board dismissed him the following day.

Conclusion

The firing and swift reinstatement of OpenAI's CEO have raised numerous questions about the company's direction and the risks posed by its AI advances. Details about the Q-Star algorithm and its implications remain undisclosed, and the AI community is waiting for more information. As OpenAI tries to balance innovation with responsibility, the world is watching closely to see how its work will shape the future of AI and its impact on humanity.

Hey there! I’m William Cooper, your go-to guy for all things travel at iMagazineDaily. I’m 39, living the dream in Oshkosh, WI, and I can’t get enough of exploring every corner of this amazing world. I’ve got this awesome gig where I blog about my travel escapades, and let me tell you, it’s never a dull moment! When I’m not busy typing away or editing some cool content, I’m out there in the city, living it up and tasting every crazy delicious thing I can find. Join me on this wild ride of adventures and stories, right here at iMagazineDaily. Trust me, it’s going to be a blast! 🌍✈️🍴